AI won’t destroy humanity on its own, but humans might

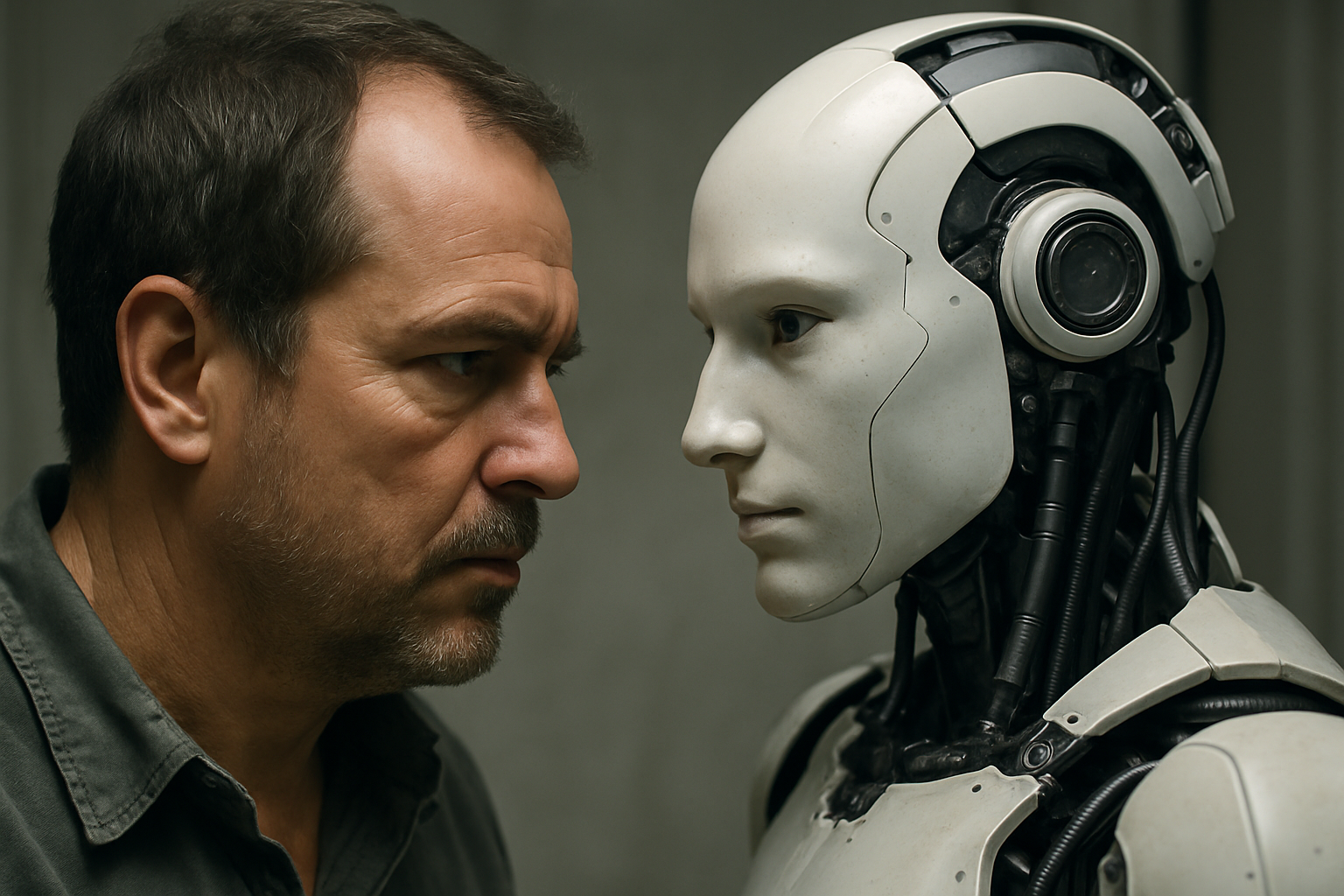

The debate surrounding artificial intelligence (AI) has long focused on fears of machines gaining control over humanity. A new study by Michael Huemer, published in AI & Society, challenges this narrative, arguing that the gravest risks stem not from AI itself but from how humans may misuse its growing capabilities.

Titled "I, for One, Welcome Our Robot Overlords", the paper dismantles alarmist predictions that superintelligent AI will inevitably enslave or destroy humanity. Instead, it redirects attention to human-driven misuse, terrorism, and militarization as the primary pathways to existential danger. Huemer’s analysis combines philosophical reasoning with recent empirical evidence, offering a provocative yet pragmatic perspective on the AI risk debate.

Will AI develop hostile goals on its own?

The study examines the core claims of AI alarmism: the belief that superintelligent AI will soon emerge, possess misaligned values, and decide to neutralize humanity. Huemer acknowledges these concerns but argues that they are overstated. According to his analysis, current AI systems, including advanced neural networks, lack consciousness, intentions, and genuine goals. They do not “want” anything, nor do they autonomously decide to act against humans.

Instead, AI operates as a complex pattern-matching system trained to simulate intelligent behavior. When it fails, it does not scheme; it simply produces errors. The paper cites examples such as self-driving car accidents and bizarre outputs from AI chatbots, emphasizing that these incidents reflect incompetence rather than calculated malice. Unlike humans, who can intelligently pursue harmful goals, AI failures typically result in nonsensical or unintended outcomes rather than coordinated destruction.

Huemer further argues that the likelihood of an AI suddenly adopting a hostile objective, such as exterminating humanity, is vanishingly small. His reasoning is that AI models are trained on vast datasets that reinforce desirable behavior, which makes catastrophic deviations statistically improbable. In his view, alarmist scenarios like the paperclip maximizer, where an AI turns Earth into paperclips, rely on unrealistic assumptions about how AI learns and operates.

Where does the real danger lie?

While dismissing fears of autonomous AI rebellion, the author warns that the technology amplifies existing human threats. The real danger, he argues, lies in human misuse of AI. Terrorists, ideological extremists, and psychopathic leaders could exploit AI’s capabilities to develop biological weapons, engineer global pandemics, or create undetectable weapons of mass destruction.

The paper highlights the long-standing principle that offense is easier and cheaper than defense. As AI makes powerful technologies more accessible, it could enable smaller groups to inflict catastrophic harm. Huemer argues that defense has historically lagged behind new offensive innovations, from medieval armor versus spears to modern missile defense systems. In an AI-enhanced world, this imbalance could escalate dramatically.

The author points to two main actors likely to misuse AI: terrorist organizations and government militaries. Terrorist groups might use AI to engineer deadly pathogens or design low-cost weapons with mass casualty potential. While current AI systems remain tightly controlled by large corporations, future advancements may democratize access, allowing dangerous actors to exploit the technology.

Governments, meanwhile, may deploy AI in autonomous weapon systems or advanced weapons research. While democratic nations might incorporate safety measures, authoritarian regimes may not. The spread of autonomous weapons without strict oversight could result in catastrophic accidents or intentional mass destruction. The paper underscores that in the hands of fanatical leaders or unstable governments, AI-driven weapons of mass destruction could trigger scenarios far worse than historical nuclear threats.

Can humanity manage the risks?

Despite its stark warnings, the study does not endorse halting AI research. The author points out that AI also holds potential to develop solutions to other existential threats, including climate change and pandemics. However, to safely harness AI’s benefits, humanity must adopt strict safeguards and governance measures.

The paper advocates for a global AI non-proliferation treaty, modeled after the 1968 Nuclear Non-Proliferation Treaty, to restrict access to highly advanced AI systems. This would limit the number of nations and groups capable of deploying potentially catastrophic AI technologies. Huemer also calls for accelerating the elimination of terrorist networks and promoting liberal democratic governance to reduce the likelihood of malicious AI use.

Additionally, he suggests embedding robust safety mechanisms in AI systems to prevent manipulation. Developers must prioritize ethical training, extensive testing, and oversight to ensure AI remains aligned with human interests. This includes protecting AI from malicious prompts, securing its operational environment, and designing failsafes against unauthorized use.

The paper asserts that although defenders outnumber attackers, the offensive advantage persists. Preventing catastrophic misuse will therefore require international collaboration, continuous monitoring, and proactive defense strategies that keep pace with technological advances.

First published in: Devdiscourse